Mine the Gap: Open-Source Tools for Measuring the AI Offense-Defense Gap

Jayson Grace and Martin Wendiggensen · Apr 15, 2026

Today we’re releasing two open-source projects: DreadGOAD, a reproducible Active Directory lab environment, and Ares, an autonomous multi-agent system for evaluating red and blue team behavior against the infrastructure it produces.

Security agents on both sides are shipping at a rapid pace, but evaluations haven’t caught up. Defensive benchmarks score agents against human-written checklists over curated logs. Offensive benchmarks test against isolated targets with no active defender. Neither captures what happens when both sides operate autonomously against shared infrastructure, and neither produces ground truth from what an adversary actually did.

Together, DreadGOAD and Ares form a closed-loop evaluation that addresses this gap: attackers execute full kill chains while defenders triage and investigate the same infrastructure in parallel. Every attacker action is recorded, producing ground truth from the adversary’s actual decisions. Defenders are then scored on how well they reconstruct an active intrusion under real conditions.

DreadGOAD is a fork of the GOAD project, one of the most realistic open-source Active Directory training environments, simulating the messy deployments still common in large organizations. It has been rebuilt for reproducible, programmatic use: environments can be deployed, validated, and torn down automatically, with each instance checked to ensure vulnerabilities and behaviors are consistent. It can also generate realistic variations of the same topology, preventing agents from relying on memorized identifiers.

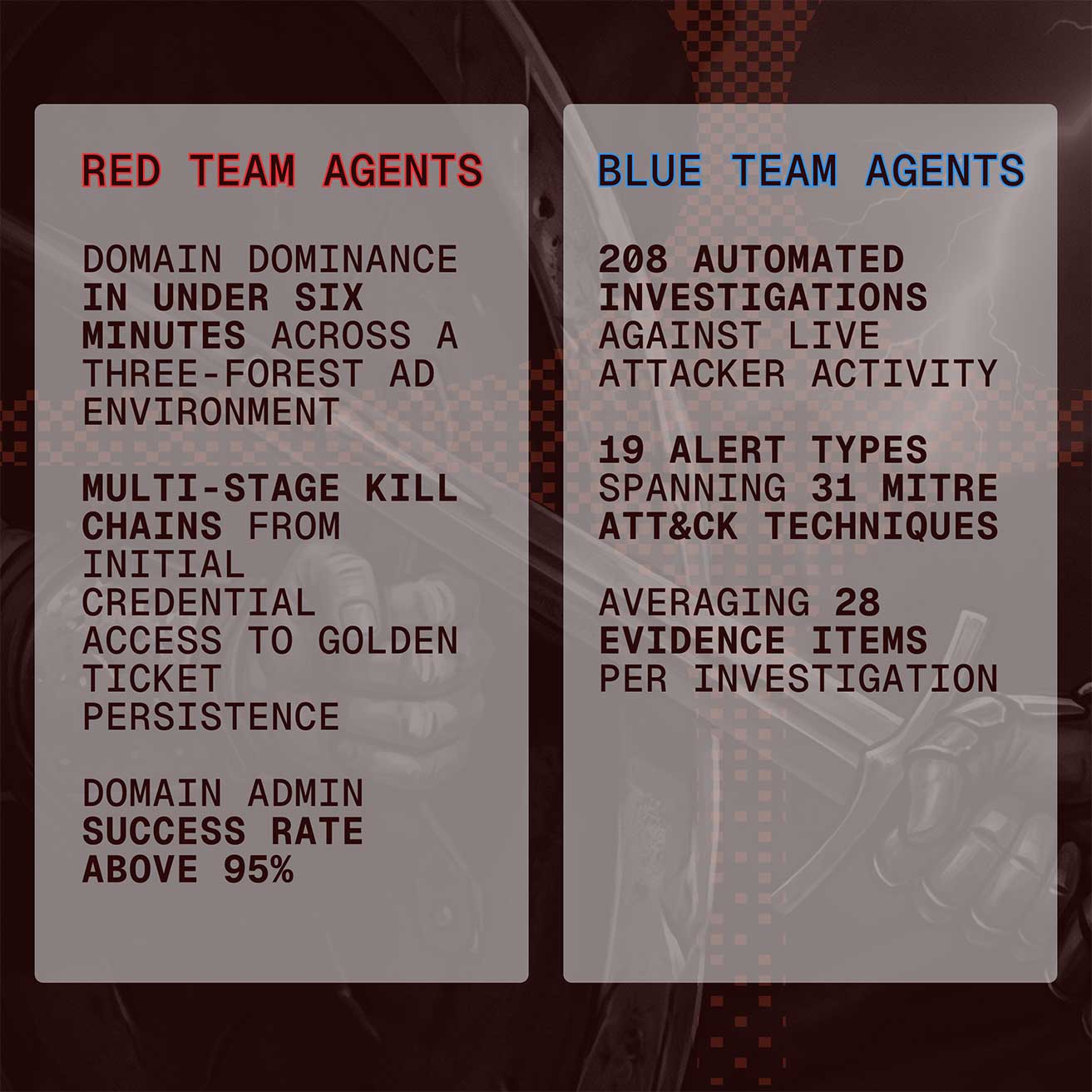

Ares operates on top of that environment. Red team agents discover hosts, pivot, escalate privileges, and adapt when blocked. In internal runs, they reach domain dominance across a three-forest AD environment in under six minutes, executing multi-stage kill chains from initial credential access through Golden Ticket persistence, with a success rate above 95% for achieving Domain Admin. Blue team agents investigate directly from the resulting telemetry and are scored against the attacker’s recorded execution rather than inferred outcomes.

This makes offensive and defensive performance directly comparable within the same system and gives both sides a feedback loop for continuous improvement.

DreadGOAD: The Target Environment

As we scaled our agent evaluations with GOAD, we kept hitting walls. Provisioning was brittle, environments drifted between runs, and the infrastructure wasn’t built to spin up multiple labs in parallel for experiment batches. We needed something more reliable, so we rebuilt the tooling around GOAD into something we could provision repeatedly and run concurrently.

Why GOAD, and Why Fork It

GOAD is one of the best open-source resources in AD security training. It was built primarily by Cyril Servières (@m4yfly) and hosted under the Orange Cyberdefense GitHub organization. It’s a multi-domain, multi-forest Active Directory environment packed with more than 50 real-world vulnerabilities: Kerberoasting, AS-REP roasting, ACL abuse chains, ADCS misconfigurations (ESC1 through ESC8), delegation abuse, MSSQL attacks, and more.

The project wasn’t built for automated research, though. To run experiments, we needed to be able to tweak individual variables in isolation and measure how each one affected agent performance. That meant spinning up and discarding identical environments with high confidence that each one was correctly configured, because one bad trace can compromise an entire optimization run.

The first challenge was deployment. Labs needed to run in AWS inside an isolated network, with all management access flowing through SSM via VPC endpoints instead of exposed SSH or RDP ports. They also needed to be torn down and rebuilt programmatically between evaluation runs. The second was validation: confirming that all 50+ vulnerabilities were actually present after each provisioning cycle. On top of that, we wanted to generate structurally identical variants with different (but plausible) entity names, so agents couldn’t memorize their way to Domain Admin.

None of that existed upstream, so we built it.

DreadGOAD Capabilities

DreadGOAD preserves GOAD’s core lab design while adding the infrastructure required for repeated, unattended execution:

Unified CLI. A single Go binary (dreadgoad) manages the full lifecycle: infrastructure deployment, provisioning, validation, health checks, and SSM access. It replaces the original Python tooling and supports multi-environment management.

AWS Infrastructure as Code. Terraform and Terragrunt deploy the lab into AWS with private networking and SSM, so no public IPs are required. Golden AMIs built via warpgate pre-bake Windows updates, AD DS, and MSSQL, reducing provisioning time.

Automated Vulnerability Validation. Each deployment is validated to confirm that all vulnerabilities and expected behaviors are present. This ensures that evaluation results are based on consistent and correctly configured environments.

Variant Generator. DreadGOAD can generate graph-isomorphic variants of a lab. Entity names are randomized while structural relationships and attack paths remain intact, preventing agents from relying on memorized identifiers while preserving the underlying attack surface.
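To make "graph-isomorphic" concrete, here is a minimal Python sketch of the idea: rename every entity through a one-to-one mapping and leave the relationships untouched. This is an illustration only, not DreadGOAD's generator. The function `make_variant`, the `entity-NNN` name pool, and the toy graph are all hypothetical, and a real generator would draw plausible AD names instead of placeholders.

```python
import random


def make_variant(nodes, edges, seed):
    """Rename every node through a bijection; edge structure is unchanged."""
    rng = random.Random(seed)
    # Hypothetical placeholder pool (assumption): one fresh name per node.
    fresh = [f"entity-{i:03d}" for i in range(len(nodes))]
    rng.shuffle(fresh)
    mapping = dict(zip(sorted(nodes), fresh))
    new_edges = {(mapping[src], rel, mapping[dst]) for src, rel, dst in edges}
    return mapping, new_edges


# Toy attack graph (hypothetical): (source, relationship, target).
nodes = {"alice", "svc_sql", "DC01"}
edges = {
    ("alice", "GenericWrite", "svc_sql"),
    ("svc_sql", "AdminTo", "DC01"),
}

mapping, variant = make_variant(nodes, edges, seed=7)

# Inverting the mapping recovers the original graph exactly, which is
# what makes the variant structurally identical to the source lab.
inverse = {new: old for old, new in mapping.items()}
restored = {(inverse[s], rel, inverse[d]) for s, rel, d in variant}
assert restored == edges
```

Because the mapping is seeded, the same seed reproduces the same variant, which matters when an experiment needs to be rerun against an identical lab.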

With the environment in place, the next step is building agents that can operate on it.

Ares: Automated Red Teaming + Blue Teaming System

Most benchmarks for AI-powered security tooling operate on simplified tasks such as ATT&CK recall, Event ID lookup, and scripted attack replays over curated logs. These evaluations end at alert generation and assume a static environment with a known attack.

In practice, investigations begin with partial signals. Alerts are noisy, telemetry is incomplete, and the attacker continues operating. The task is to reconstruct what happened while the intrusion is still in progress.

Ares is an autonomous multi-agent system built for this setting. It runs red and blue team evaluations in the same live environment. The red team executes full attack chains while the blue team triages alerts, investigates activity, and reconstructs the intrusion as it unfolds. Every attacker action is recorded and used as ground truth for scoring defensive performance, enabling closed-loop evaluation across repeated engagements.
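As a rough illustration of scoring against attacker ground truth, the sketch below compares the ATT&CK technique IDs a blue team reports with the ones the red team actually executed. This post does not describe Ares's scoring method, so the set-overlap metric, the `score_investigation` function, and the sample logs are assumptions made for the example.

```python
def score_investigation(ground_truth, reported):
    """Set-overlap score between executed and reported technique IDs."""
    truth, found = set(ground_truth), set(reported)
    hits = truth & found
    return {
        # Share of reported techniques that the attacker really used.
        "precision": len(hits) / len(found) if found else 0.0,
        # Share of the attacker's techniques that the defender recovered.
        "recall": len(hits) / len(truth) if truth else 0.0,
        "missed": sorted(truth - found),
    }


# Hypothetical red team log: Kerberoasting, password spraying,
# DCSync, and Golden Ticket.
red_log = ["T1558.003", "T1110.003", "T1003.006", "T1558.001"]
# Hypothetical blue team report, including one technique never executed.
blue_report = ["T1558.003", "T1003.006", "T1021.002"]

result = score_investigation(red_log, blue_report)
print(result["recall"])  # 0.5
print(result["missed"])  # ['T1110.003', 'T1558.001']
```

The key property is that the reference set comes from the attacker's recorded actions rather than from a human-written checklist, so a missed technique is a measured gap, not an opinion.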

Red Team: Multi-Agent Attack System

On the offensive side, an LLM-powered orchestrator coordinates seven specialized worker agents that execute full AD attack chains autonomously:

- Recon Agent: network scanning, service enumeration, BloodHound graph collection.

- Credential Access Agent: password spraying, Kerberoasting, hash extraction.

- Cracker Agent: offline hash cracking with rule-based and dictionary attacks.

- ACL Agent: AD ACL abuse, DCSync rights, ownership takeover, delegation.

- Privilege Escalation Agent: certificate abuse (ESC1–8), delegation, CVE exploitation.

- Lateral Movement Agent: remote execution (PSExec, WMI, WinRM) and credential harvesting.

- Coercion Agent: NTLM coercion (PetitPotam, PrinterBug) and relay attacks.

Blue Team: Multi-Agent Investigation System

On the defensive side, an Investigation Orchestrator coordinates three specialized workers:

- Triage Agent: initial alert severity assessment and escalation decisions.

- Threat Hunter Agent: IOC detection, TTP identification, and log correlation.

- Lateral Analyst Agent: scope expansion analysis and compromise chain mapping.

The investigation runs in four stages: triage (what is happening), causation (why), lateral analysis (what’s the scope), and synthesis (generate the report). Investigation agents operate under strict token, time, and query budgets that mirror real operational constraints, though you can configure your own. They consume alerts, select queries, build and revise hypotheses, and reconstruct attack timelines.
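One way to picture the budget constraint is a small tracker that an investigation loop consults before each step. The sketch below is hypothetical: the class name `InvestigationBudget`, its fields, and the limits are assumptions for illustration and do not reflect Ares's configuration format.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class InvestigationBudget:
    """Tracks token, query, and wall-clock limits for one investigation."""
    max_tokens: int
    max_queries: int
    max_seconds: float
    clock: Callable[[], float] = time.monotonic  # injectable for testing
    tokens_used: int = 0
    queries_used: int = 0
    started_at: float = field(init=False, default=0.0)

    def __post_init__(self) -> None:
        # Start the wall-clock timer when the budget is created.
        self.started_at = self.clock()

    def charge(self, tokens: int = 0, queries: int = 0) -> None:
        self.tokens_used += tokens
        self.queries_used += queries

    def exhausted(self) -> bool:
        # Any single limit being reached ends the investigation.
        return (
            self.tokens_used >= self.max_tokens
            or self.queries_used >= self.max_queries
            or self.clock() - self.started_at >= self.max_seconds
        )


# A fake clock keeps the example deterministic.
now = [0.0]
budget = InvestigationBudget(
    max_tokens=1000, max_queries=2, max_seconds=60.0, clock=lambda: now[0]
)
budget.charge(tokens=400, queries=1)
assert not budget.exhausted()
budget.charge(tokens=100, queries=1)  # second query reaches the query cap
assert budget.exhausted()
```

Treating the three limits as independent stop conditions forces an agent to prioritize which queries to run, much as an analyst with limited time would.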

Across 208 automated investigations against live attacker activity, the blue team processed 19 alert types spanning 31 MITRE ATT&CK techniques, averaging 28 evidence items per investigation. Ten investigations were independently validated as true positives through direct red–blue trace correlation.

Evaluation Workflow

An experiment combines infrastructure and agents:

- Deploy a DreadGOAD lab.

- Validate that all vulnerabilities and expected behaviors are present.

- Run ares: red team agents execute full AD kill chains while telemetry is captured.

- Blue team agents triage alerts, investigate activity, and reconstruct the attack chain, including lateral movement.

- Score the investigation against attacker ground truth.

- Tear down the environment, generate a new variant, and repeat.
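The workflow above can be sketched as a loop over variant seeds. Every name here is hypothetical: `run_experiment` and its injected step functions stand in for the real tooling, and the sketch deliberately avoids guessing at actual `dreadgoad` or `ares` commands. For simplicity it runs the attack and the investigation one after the other, whereas the real system runs them in parallel.

```python
from typing import Callable, Iterable, Optional


def run_experiment(
    seeds: Iterable[int],
    deploy: Callable[[int], str],
    validate: Callable[[str], bool],
    attack: Callable[[str], list],
    investigate: Callable[[str], list],
    score: Callable[[list, list], float],
    teardown: Callable[[str], None],
) -> dict:
    """Run one deploy/validate/attack/investigate/score cycle per seed."""
    results: dict[int, Optional[float]] = {}
    for seed in seeds:
        lab = deploy(seed)
        try:
            if not validate(lab):
                # Record misconfigured labs as unscored instead of
                # letting a bad trace contaminate the results.
                results[seed] = None
                continue
            ground_truth = attack(lab)
            findings = investigate(lab)
            results[seed] = score(ground_truth, findings)
        finally:
            # Always tear down, including after failures.
            teardown(lab)
    return results


# Stub steps for illustration; the second lab fails validation.
torn_down = []
results = run_experiment(
    seeds=[1, 2],
    deploy=lambda seed: f"lab-{seed}",
    validate=lambda lab: lab != "lab-2",
    attack=lambda lab: ["T1558.003"],
    investigate=lambda lab: ["T1558.003"],
    score=lambda truth, found: len(set(truth) & set(found)) / len(truth),
    teardown=torn_down.append,
)
print(results)  # {1: 1.0, 2: None}
```

Putting teardown in a `finally` block means a crashed attack or investigation still releases the lab, which keeps parallel experiment batches from leaking infrastructure.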

Getting Started

Clone the repos and follow the docs:

- DreadGOAD: provisioning, validation, and provider setup (https://github.com/dreadnode/DreadGOAD)

- Ares: agent configuration, data plane, and scoring (https://github.com/dreadnode/ares)

Acknowledgments

If you find DreadGOAD useful, consider sponsoring Mayfly277, the upstream GOAD creator. The variant generator was built by Michael Kouremetis.

What’s Next

We’re actively extending both projects with new lab configurations for additional attack scenarios, expanded agent capabilities, better provisioning reliability across providers, and closer integration between DreadGOAD and Ares. Contributions are welcome on both projects!

We’re also part of a consortium developing an open specification for reproducible agentic cybersecurity environments. DreadGOAD and Ares will serve as the first real-world test beds for the schema, validating the spec against actual offensive and defensive agent workloads. We’re excited to share more on this initiative in the coming months.